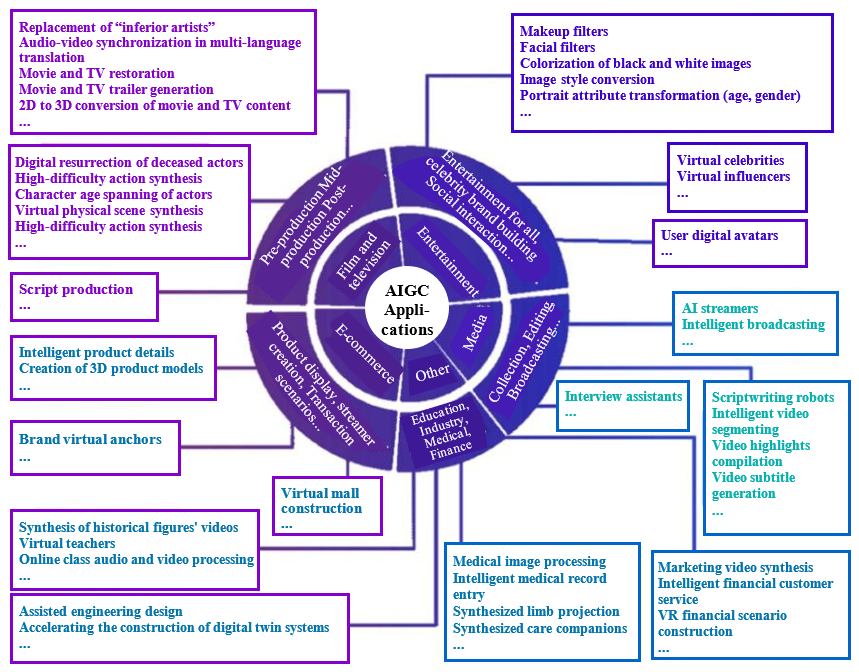

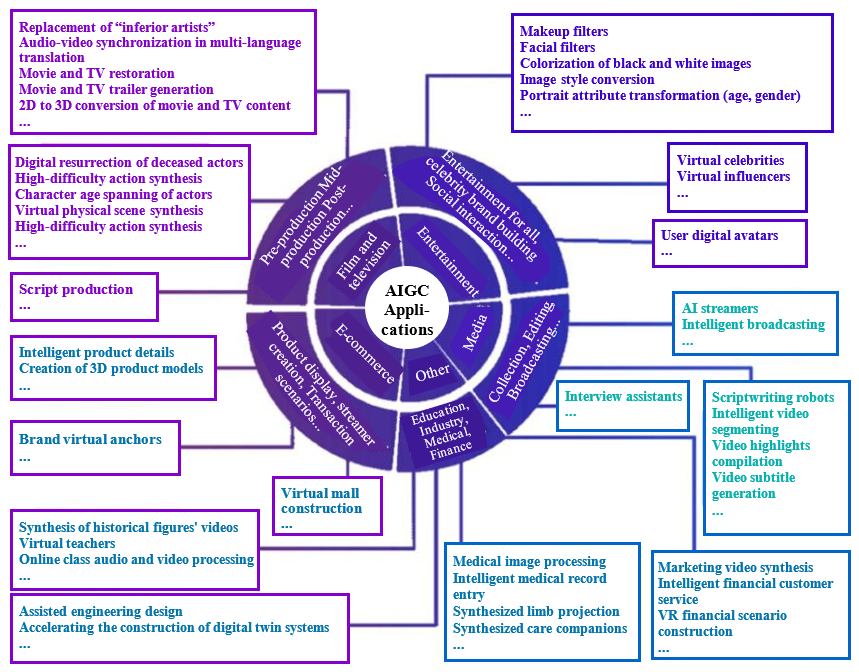

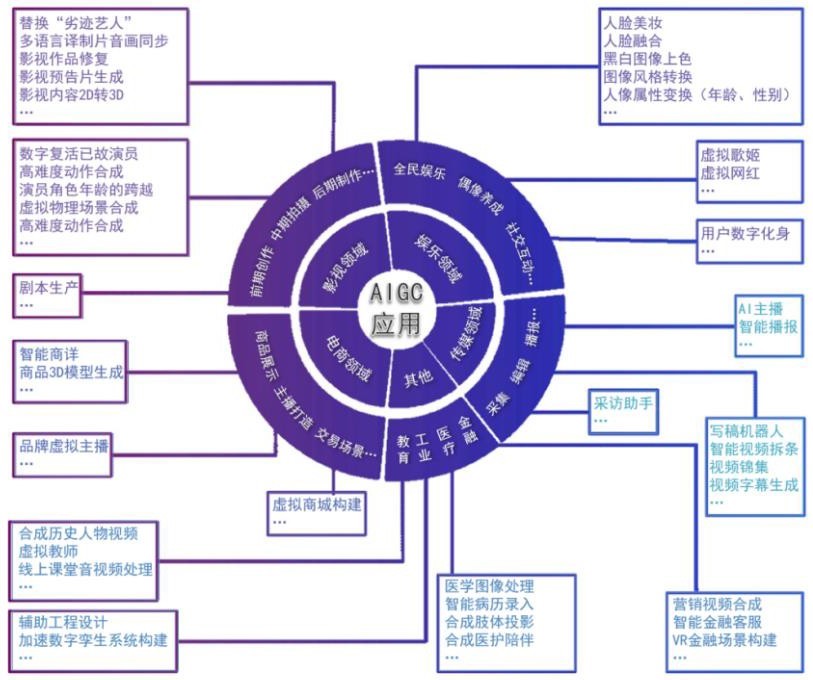

III. AIGC application scenarios

三、人工智能生成内容的应用场景

Against the backdrop of a protracted and recurring COVID-19 pandemic, demand for digital content has surged across industries, and there is an urgent need to close the gap between content consumption and content supply in the digital world. With its verisimilitude, diversity, controllability, and ease of composition, AIGC is expected to help enterprises produce content more efficiently and to give them richer, more diverse, dynamic, and interactive content. It may achieve major innovation-driven development first in highly digitalized industries with abundant demand for content, such as media, e-commerce, film and television, and entertainment.

在全球新冠肺炎疫情延宕反复的背景下,各行业对于数字内容的需求呈现井喷态势,数字世界内容消耗与供给的缺口亟待弥合。AIGC以其真实性、多样性、可控性、组合性的特征,有望帮助企业提高内容生产的效率,以及为其提供更加丰富多元、动态且可交互的内容,或将率先在传媒、电商、影视、娱乐等数字化程度高、内容需求丰富的行业取得重大创新发展。

Figure 2. AIGC applications

图 2 AIGC应用视图

Source: China Academy of Information and Communications Technology (CAICT)

来源:中国信息通信研究院

(i) AIGC + media: Production based on human-computer collaboration is promoting media convergence

(一)AIGC+传媒:人机协同生产,推动媒体融合

As the level of informatization has risen at an accelerating pace worldwide in recent years, the integration of AI and the mass media industry has continued to deepen. As a new mode of content production, AIGC comprehensively empowers content production for media. Applications such as writing bots, interview assistants, video subtitle generation, voice broadcasting, video compilation, and AI-synthesized anchors keep emerging and have penetrated every stage of the workflow, from collection and editing to dissemination. They have profoundly changed how media content is produced and have become an important force driving media convergence.

近年来,随着全球信息化水平的加速提升,人工智能与传媒业的融合发展不断升级。AIGC作为当前新型的内容生产方式,为媒体的内容生产全面赋能。写稿机器人、采访助手、视频字幕生成、语音播报、视频锦集、人工智能合成主播等相关应用不断涌现,并渗透到采集、编辑、传播等各个环节,深刻地改变了媒体的内容生产模式,成为推动媒体融合发展的重要力量。

In collection and editing, AIGC contributes in three ways. (1) Transcription of recorded interviews improves the working experience of media workers. Using speech recognition to transcribe recorded speech into text effectively cuts the repetitive work of organizing recordings during article production and further safeguards the timeliness of news. During the 2022 Winter Olympics, iFlytek’s smart voice recorder used cross-language voice transcription to help reporters produce articles in two minutes. (2) Intelligent news writing improves the timeliness of news. Algorithm-based automatic news writing automates part of the labor-intensive gathering and editing work, helping media produce content faster, more accurately, and more intelligently. For example, Quakebot, the robot reporter of the Los Angeles Times website, wrote and published a news item only three minutes after an earthquake struck Los Angeles in March 2014. Wordsmith, an intelligent writing platform used by the Associated Press, can write 2,000 reports per second. The China Earthquake Network’s writing robot finished compiling and distributing a report within seven seconds of the 2017 Jiuzhaigou earthquake. Yicai Media Group’s “DT Draft King” can write 1,680 words a minute.[1] (3) Intelligent video editing increases the value of video content. Intelligent editing tools such as video subtitle generation, video compilation, video topic segmentation, and video super-resolution save labor and time and maximize the value of copyrighted content. During the 2020 National People’s Congress, the People’s Daily used an “intelligent cloud video editor” to generate videos quickly, with automatic subtitle matching, real-time character tracking, correction of picture shake, and fast switching from horizontal to vertical orientation, to meet the requirements of multi-platform distribution.[2] During the 2022 Winter Olympics, CCTV Video used an “AI intelligent content production editing system” to efficiently produce and distribute video highlights of the Winter Olympics ice and snow events, creating more possibilities for developing the deeper value of copyrighted sports media content.

在采编环节,一是实现采访录音语音转写,提升传媒工作者的工作体验。借助语音识别技术将录音语音转写成文字,有效压缩稿件生产过程中录音整理方面的重复工作,进一步保障了新闻的时效性。2022年冬奥会期间,科大讯飞的智能录音笔通过跨语种的语音转写助力记者2分钟快速出稿。二是实现智能新闻写作,提升新闻资讯的时效。基于算法自动编写新闻,将部分劳动性的采编工作自动化,帮助媒体更快、更准、更智能化地生产内容。比如2014年3月,美国洛杉矶时报网站的机器人记者Quakebot,在洛杉矶地震发生后仅3分钟,就写出相关消息并进行发布;美联社使用的智能写稿平台Wordsmith可以每秒写2000篇报道;中国地震台网的写稿机器人在九寨沟地震发生后7秒内就完成了相关消息的编发;第一财经“DT稿王”一分钟可写出1680字。三是实现智能视频剪辑,提升视频内容的价值。通过使用视频字幕生成、视频锦集、视频拆条、视频超分等视频智能化剪辑工具,高效节省人力时间成本,最大化版权内容价值。2020年全国两会期间,人民日报社利用“智能云剪辑师”快速生成视频,并能够实现自动匹配字幕、人物实时追踪、画面抖动修复、横屏速转竖屏等技术操作,以适应多平台分发要求。2022年冬奥会期间,央视视频通过使用AI智能内容生产剪辑系统,高效生产与发布冬奥冰雪项目的视频集锦内容,为深度开发体育媒体版权内容价值,创造了更多的可能性。
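The template-driven style of automated writing described above for earthquake bulletins can be illustrated with a short sketch. This is not the implementation of Quakebot or of any newsroom system; the `QuakeEvent` fields, the severity thresholds, and the sentence template are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class QuakeEvent:
    """Structured alert fields (hypothetical names, not a real feed schema)."""
    magnitude: float
    place: str
    depth_km: float
    time_utc: datetime


def severity(magnitude: float) -> str:
    """Map a magnitude to a descriptive word (illustrative thresholds only)."""
    if magnitude >= 6.0:
        return "strong"
    if magnitude >= 4.5:
        return "moderate"
    return "light"


def write_brief(event: QuakeEvent) -> str:
    """Fill a fixed sentence template from the structured event data."""
    when = event.time_utc.strftime("%H:%M UTC on %d %B %Y")
    return (
        f"A {severity(event.magnitude)} magnitude {event.magnitude:.1f} "
        f"earthquake struck near {event.place} at {when}, at a depth of "
        f"{event.depth_km:.0f} km. This brief was generated automatically "
        f"and should be reviewed by an editor."
    )


if __name__ == "__main__":
    sample = QuakeEvent(
        magnitude=4.4,
        place="Exampleville",  # placeholder location
        depth_km=9.0,
        time_utc=datetime(2020, 1, 1, 6, 25, tzinfo=timezone.utc),
    )
    print(write_brief(sample))
```

Because the text is assembled from structured fields rather than composed freely, a draft of this kind can be ready seconds after the data arrives, which is consistent with the turnaround times quoted above. It also explains why such systems suit formulaic reports better than features or in-depth reporting.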

In dissemination, AIGC applications are concentrated mainly in news broadcasting and related areas, with AI-synthesized anchors at the core. AI-synthesized anchors set a precedent for real-time voice and character animation synthesis in the news field. An operator only needs to enter the text to be broadcast, and the computer generates the corresponding news video presented by an AI-synthesized anchor, keeping the character’s voice, expressions, and lip movements naturally consistent and conveying information as effectively as a human anchor. The application of AI-synthesized anchors in media shows three characteristics. (1) The scope of application is expanding. National media such as Xinhua News Agency, CCTV, and People’s Daily, as well as provincial and municipal media such as Hunan TV, have begun actively deploying AI-synthesized anchors, launching virtual news hosts including “Xin Xiaowei” and “Little C.” They have also extended the technology from news broadcasting to a wider range of scenarios, such as gala hosting, reporting, and weather forecasting, and have deeply empowered coverage of major events such as the National People’s Congress, the Winter Olympics, and the Winter Paralympics. (2) Application scenarios are being upgraded. In addition to regular news broadcasting, AI-synthesized anchors have begun to support multilingual and sign language broadcasting. During the 2020 National People’s Congress, a multilingual virtual anchor reported news in Chinese, Korean, Japanese, English, and other languages, delivering one voice in multiple languages, bringing Chinese news to the world, and following the trend toward information sharing in the information age.[3] During the 2022 Winter Olympics, Baidu, Tencent, and other enterprises launched sign language “digital humans” that provided sign language commentary for tens of millions of hearing-impaired users, further advancing barrier-free viewing of the Games. (3) Application forms are maturing. In terms of appearance, anchors are gradually expanding from 2D to 3D; in terms of actuation, the range is extending from the mouth to facial expressions, limbs, fingers, and background content; and in terms of content construction, the direction is from supporting SaaS-based platform tools toward exploring intelligent production. For example, Tencent’s 3D digital sign language interpreter “Lingyu” generates lip movements, facial expressions, body movements, and finger movements, and comes with a visual action editing platform that supports fine-tuning of sign language actions.

在传播环节,AIGC应用主要集中于以AI合成主播为核心的新闻播报等领域。AI合成主播开创了新闻领域实时语音及人物动画合成的先河,只需要输入所需要播发的文本内容,计算机就会生成相应的AI合成主播播报的新闻视频,并确保视频中人物音频和表情、唇动保持自然一致,展现与真人主播无异的信息传达效果。纵观AI合成主播在传媒领域的应用,呈现三方面的特点。一是应用范围不断拓展。目前新华社、中央广播电视总台、人民日报社等国家级媒体及湖南卫视等省市媒体都开始积极布局应用AI合成主播,先后推出“新小微”、“小C”等虚拟新闻主持人,并推动其从新闻播报向晚会主持、记者报道、天气预报等更广泛的场景应用,为全国两会、冬奥会、冬残奥会等重大活动传播深度赋能。二是应用场景不断升级。除了常规的新闻播报,AI合成主播开始陆续支持多语种播报和手语播报。2020年全国两会期间,多语种虚拟主播采用中、韩、日、英等多种语言进行新闻报道,实现了一音多语的播报,将中国新闻传递给世界,顺应了信息化时代信息共享的发展潮流。2022年冬奥会期间,百度、腾讯等企业推出手语播报数字人,为千万听障用户提供手语解说,进一步推动观赛的无障碍进程。三是应用形态日趋完善。在形象方面,逐步从2D向3D拓展;在驱动范围上,开始从口型向面部表情、肢体、手指、背景内容素材延伸;在内容构建上,从支持SaaS化平台工具构建向智能化生产探索。例如腾讯3D手语数智人“聆语”,实现了唇动、面部表情、肢体动作、手指动作等内容的生成,并配套可视化动作编辑平台,支持对手语动作进行精修。

AIGC is having a profound impact on media organizations, media practitioners, and media audiences. For media organizations, bringing AIGC into the news production process greatly improves production efficiency and creates new visual and interactive experiences. It enriches the forms of news reporting, accelerates the digital transformation of media, and pushes media toward becoming smart media. For media practitioners, AIGC can help produce news that has more humanistic care, social significance, and economic value. It can also automate part of the labor-intensive work of gathering, editing, and broadcasting news, so that practitioners can focus on work that requires deep thought and creativity, such as news features, in-depth reports, and special reports, niches that draw more heavily on human strengths in analyzing things precisely and handling emotional elements appropriately. For media audiences, AIGC makes it possible to obtain news content in richer and more diverse forms in less time, improving the timeliness and convenience of access to news. It also lowers the technical barriers of the media industry, giving audiences more opportunities to take part in content production and greatly enhancing their sense of participation.

AIGC对传媒机构、传媒从业者和传媒受众都产生深刻影响。对传媒机构来说,AIGC通过参与新闻产品的生产过程,大幅提高生产效率,并带来新的视觉化、互动化体验;丰富了新闻报道的形式,加速了媒体的数字化转型,推动传媒向智媒转变。对传媒从业者来说,AIGC可助力生产更具人文关怀、社会意义和经济价值的新闻作品;将部分劳动性的采编播工作自动化,让其更加专注于需要深入思考和创造力的工作内容,如新闻特稿、深度报道和专题报道等此类更需发挥人类在精准分析事物、妥善处理情感元素等方面优势的细分领域。对传媒受众来说,AIGC的应用可使其在更短时间内获得以更丰富多元的形态呈现的新闻内容,提高了其获取新闻信息的及时性和便捷性;降低了传媒行业的技术门槛,促使传媒受众具有更多参与内容生产的机会,极大增强其参与感。

(ii) AIGC + e-commerce: Promoting the blending of virtual and real, and creating an immersive experience

(二)AIGC+电商:推进虚实交融,营造沉浸体验

With the development and application of digital technology and the upgrading and acceleration of consumption, e-commerce is moving toward immersive shopping experiences. AIGC is accelerating the construction of 3D product models, virtual hosts, and even virtual showrooms, and, combined with new technologies such as augmented reality (AR) and virtual reality (VR), it delivers immersive shopping experiences with audio-visual and other multi-sensory interaction.

随着数字技术的发展和应用、消费的升级和加快,购物体验沉浸化成为电商领域发展的方向。AIGC正加速商品3D模型、虚拟主播乃至虚拟货场的构建,通过和AR、VR等新技术的结合,实现视听等多感官交互的沉浸式购物体验。

Generating 3D product models for product display and virtual trial enhances the online shopping experience. From images of a product taken at different angles, visual generation algorithms automatically generate its 3D geometric model and textures. Supplemented by online virtual “viewing, trying, and wearing,” these models provide a differentiated online shopping experience close to handling the real thing and help raise user conversion efficiently. Baidu, Huawei, and other enterprises have launched automated 3D product modeling services that complete 3D capture and generation of a product within minutes, with accuracy reaching the millimeter level. Unlike traditional 2D displays, 3D models show a product’s appearance from all angles, which can significantly reduce the time users spend on selection and communication, improve the user experience, and help close sales quickly. The generated 3D product models can also be used for online try-on, closely reproducing the experience of trying out a product or service and giving consumers more opportunities to encounter the absolute value of products or services. One example is the 3D version of Tmall Home Furnishing City, which Alibaba put online in April 2021. By providing merchants with 3D design tools and AI-based 3D product model generation services, it helps them quickly build 3D shopping spaces; it also lets consumers put together home furnishings themselves, giving them an immersive “cloud shopping” experience. Data show that the average conversion rate of 3D shopping is 70%, 9 times higher than the industry average. The average order value rose by more than 200% compared with normally guided transactions, while the rate of returns and exchanges fell significantly. Many brands have also begun exploring virtual trials. Examples include Uniqlo’s virtual fitting, Adidas’ virtual shoe try-on, Chow Tai Fook’s virtual jewelry try-on, Gucci’s virtual try-on for watches and glasses, Ikea’s virtual furniture matching, and Porsche’s virtual test drive.[4] Although these still rely on traditional manual modeling, more consumer-level tools are expected to emerge as AIGC technology continues to progress, gradually lowering the barriers and cost of 3D modeling and facilitating large-scale commercial use of virtual try-on.

生成商品3D模型用于商品展示和虚拟试用,提升线上购物体验。基于不同角度的商品图像,借助视觉生成算法自动化生成商品的3D几何模型和纹理,辅以线上虚拟“看、试、穿、戴”,提供接近实物的差异化网购体验,助力高效提升用户转化。百度、华为等企业都推出商品自动化3D建模服务,支持在分钟级的时间内完成商品的3D拍摄和生成,精度可达到毫米级。相较于传统2D展示,3D模型可720°全方位展示商品主体外观,可大幅度降低用户选品和沟通时间,提升用户体验感,快速促成商品成交。同时生成出的3D商品模型还可用于在线试穿,高度还原商品或服务试用的体验感,让消费者有更多机会接触到产品或服务的绝对价值。如阿里于2021年4月上线3D版天猫家装城,通过为商家提供3D设计工具及商品3D模型AI生成服务,帮助商家快速构建3D购物空间,支持消费者自己动手做家装搭配,为消费者提供沉浸式的“云逛街”体验。数据显示,3D购物的转化率平均值为70%,较行业平均水平提升了9倍,同比正常引导成交客单价提升超200%,同时商品退换货率明显降低。此外,不少品牌企业也开始在虚拟试用方向上开展探索和尝试,如优衣库虚拟试衣、阿迪达斯虚拟试鞋、周大福虚拟试珠宝、Gucci虚拟试戴手表和眼镜、宜家虚拟家具搭配、保时捷虚拟试驾等。尽管目前还是采用的传统手动建模方式,但随着AIGC技术的不断进步,未来有望涌现更多消费级工具,从而逐步降低3D建模的门槛和成本,助力虚拟试穿应用大规模商用。
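The conversion figures quoted above can be read in two ways, because “9 times higher” may mean either 9 times the industry average or the average plus 9 times the average. The calculation below is offered only as a reading aid and shows the baseline each reading implies. The source does not state the industry average, so neither result is a reported figure, and the function names are illustrative.

```python
def implied_baseline(rate: float, times: float, additive: bool) -> float:
    """Return the baseline implied by a rate described as `times` higher.

    additive=False: rate = times * baseline
    additive=True:  rate = baseline + times * baseline
    """
    divisor = times + 1 if additive else times
    return rate / divisor


def growth_to_multiplier(increase: float) -> float:
    """Convert a fractional increase (2.0 means +200%) to a multiplier."""
    return 1 + increase


reported_rate = 0.70  # 70% average conversion rate quoted in the text

print(f"{implied_baseline(reported_rate, 9, additive=False):.1%}")  # 7.8%
print(f"{implied_baseline(reported_rate, 9, additive=True):.1%}")   # 7.0%

# An order value that "rose by more than 200%" is more than 3x the baseline.
print(f"{growth_to_multiplier(2.0):.1f}x")  # 3.0x
```

Under either reading the implied industry-average conversion rate is in the range of roughly 7% to 8%.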

Creating virtual hosts and empowering livestream marketing (“live shopping”). Virtual livestream hosts built on visual, voice, and text generation technology give audiences round-the-clock product recommendations, introductions, and online service, and lower the barriers to livestreaming for merchants. Compared with livestream shopping rooms staffed by real people, virtual hosts have three major advantages. First, virtual hosts can fill the gaps between real hosts’ sessions so that the livestream room runs without interruption, which gives users more flexible viewing times and a more convenient shopping experience and creates more business growth for partner merchants. For example, the virtual hosts of brands such as L’Oreal, Philips, and Perfect Diary generally go online at midnight and stream for nearly nine hours, combining with human hosts to form a seamless 24-hour livestreaming service. Second, virtual brand hosts can accelerate the rejuvenation of a store or brand, bring it closer to new consumer groups, and shape a store image for the metaverse era. In the future, they can be extended to more diverse virtual scenarios in the metaverse to reach multiple audience circles. For example, the makeup brand Carslan launched its own virtual brand image and introduced it into its livestream room as the daily virtual host and shopping guide of its Tmall flagship store. Traditional enterprises that already have a virtual brand IP image can quickly turn that existing image into a virtual brand host. For example, during Haier’s livestream promotion in May 2020, the familiar “Haier Brothers” virtual IP came to the livestream room and interacted with the host and fans, drawing as many as ten million plays. Third, the persona of a virtual host is more stable and controllable. When top hosts are scarce and may suffer a “public persona collapse,” a virtual host’s persona, words, and deeds are controlled by the brand, making it more controllable and secure than a real celebrity. Brands need not worry that a virtual image’s persona will collapse and bring them negative news, bad reviews, and financial losses.

打造虚拟主播,赋能直播带货。基于视觉、语音、文本生成技术,打造虚拟主播为观众提供24小时不间断的货品推荐介绍以及在线服务能力,为商户直播降低门槛。相比真人直播间带货,虚拟主播具备三大优势:一是虚拟主播能够填补真人主播的直播间隙,使直播间能不停轮播,既为用户提供更灵活的观看时间和更方便的购物体验,也为合作商家创造更大的生意增量。如欧莱雅、飞利浦、完美日记等品牌的虚拟主播一般会在凌晨0点上线,并进行近9个小时的直播,与真人主播形成了24小时无缝对接的直播服务。二是虚拟化的品牌主播更能加速店铺或品牌年轻化进程,拉近与新消费人群的距离,塑造元宇宙时代的店铺形象,未来可通过延展应用到元宇宙中更多元的虚拟场景,实现多圈层传播。如彩妆品牌“卡姿兰”推出自己的品牌虚拟形象,并将其引入直播间作为其天猫旗舰店日常的虚拟主播导购。同时对于已具备虚拟品牌IP形象的传统企业,可直接利用已有形象快速转化形成虚拟品牌主播。如在2020年5月海尔直播大促活动中,大家所熟知的海尔兄弟虚拟IP来到直播间,并同主持人和粉丝一起互动,高达千万播放量。三是虚拟主播人设更稳定可控。在头部主播有限并且可能“人设崩塌”的情况下,虚拟主播人设、言行等由品牌方掌握,比真人明星的可控性、安全性更强。品牌不必担心虚拟形象人设崩塌,为品牌带来负面新闻、差评及资金损失。

Empowering online malls and offline showrooms to evolve faster and offering consumers new shopping scenarios. Reconstructing the 3D geometric structure of scenes from 2D images makes it possible to build virtual showrooms quickly, cheaply, and in large volumes. For merchants, this effectively lowers the barriers and cost of building 3D shopping spaces. For industries that originally relied heavily on offline stores, it opens up room for imagining online-offline fusion, and for consumers it offers a new consumer experience that blends online and offline. Some brands have already begun creating virtual spaces. For example, for its 100th anniversary, the luxury brand Gucci moved its offline Gucci Garden Archetypes exhibition onto the Roblox online game platform, launching a two-week virtual exhibition whose five themed halls corresponded to the real exhibition. In July 2021, Alibaba showcased its “Buy+” VR project for the first time, offering a 360° virtual shopping venue that was open for users to experience. In November 2021, Nike and Roblox partnered to launch the virtual world Nikeland, open to all Roblox users. With the successful application of image-based 3D reconstruction technology in Google Maps’ immersive view feature, the automated construction of virtual showrooms is expected to be applied and developed further.

赋能线上商城和线下秀场加速演变,为消费者提供全新的购物场景。通过从二维图像中重建场景的三维几何结构,实现虚拟货场快速、低成本、大批量的构建,将有效降低商家搭建3D购物空间的门槛及成本,为一些原本高度倚重线下门店的行业打开了线上线下融合的想象空间,同时为消费者提供线上线下融合的新消费体验。目前一些品牌已经开始尝试打造虚拟空间。例如奢侈品商Gucci在一百周年品牌庆典时,把线下的Gucci Garden Archetypes展览搬到了游戏Roblox上,推出了为期两周的虚拟展,5个主题展厅的内容与现实展览相互对应。2021年7月,阿里巴巴首次展示了其虚拟现实计划“Buy+”,并提供360°虚拟的购物现场开放购物体验。2021年11月,Nike和Roblox合作,推出虚拟世界Nikeland,并向所有Roblox用户开放。随着基于图像的3D重建技术在谷歌地图沉浸式视图功能中的成功应用,虚拟货场的自动化构建未来将得到更好的应用和发展。

(iii) AIGC + film and television: Expanding the creative space and enhancing the quality of creative works

(三)AIGC+影视:拓展创作空间,提升作品质量

With the rapid development of the film and TV industry, process problems, from the early creation phase and shooting to post-production, have also emerged. There are development pains, such as a relative lack of high-quality scripts, high production costs, and poor quality of some works, so structural upgrading is urgently needed. The use of AIGC technology can stimulate ideas for film and TV script creation, expand the space for film and TV character and scene creation, and greatly improve the quality of post-production for film and TV products, thereby helping to maximize the cultural and economic value of film and TV works.

随着影视行业的快速发展,从前期创作、中期拍摄到后期制作的过程性问题也随之显露,存在高质量剧本相对缺乏、制作成本高昂以及部分作品质量有待提升等发展痛点,亟待进行结构升级。运用AIGC技术能激发影视剧本创作思路,扩展影视角色和场景创作空间,极大地提升影视产品的后期制作质量,帮助实现影视作品的文化价值与经济价值最大化。

AIGC provides new ideas for script creation. AI analyzes and summarizes massive amounts of script data and quickly produces scripts in preset styles; creators then screen the output and rework it. This inspires creators, broadens their creative thinking, and shortens the creative cycle. Attempts of this kind began abroad. As early as June 2016, New York University used AI to write the screenplay for the film Sunspring, which, after filming and production, made the top ten in Sci-Fi London’s 48-Hour Challenge.[5] In 2020, students at Chapman University in the United States used OpenAI’s GPT-3 large language model to write a screenplay and produced the short film Solicitors. In China, some technology companies in vertical fields have begun to provide services for intelligent script production. One is Haima Qingfan’s “novel to screenplay” intelligent writing function, which has served more than 30,000 episodes of series scripts, more than 8,000 theatrical or made-for-streaming film screenplays, including hits such as Hi, Mom and The Wandering Earth, and over five million online novels.

AIGC为剧本创作提供新思路。通过对海量剧本数据进行分析归纳,并按照预设风格快速生产剧本,创作者再进行筛选和二次加工,以此激发创作者的灵感,开阔创作思路,缩短创作周期。国外率先开展相关尝试,早在2016年6月,纽约大学利用人工智能编写的电影剧本《Sunspring》,经拍摄制作后入围伦敦科幻电影(Sci-Fi London)48小时挑战前十强。2020年,美国查普曼大学的学生利用OpenAI的大模型GPT-3创作剧本并制作短片《律师》。国内部分垂直领域的科技公司开始提供智能剧本生产相关的服务,如海马轻帆推出的“小说转剧本”智能写作功能,服务了包括《你好,李焕英》《流浪地球》等爆款作品在内的剧集剧本30000多集、电影/网络电影剧本8000多部、网络小说超过500万部。

AIGC expands the space for character and scene creation. First, AI synthesis of faces, voices, and related content makes possible the “digital resurrection” of deceased actors, the replacement of scandal-tainted performers (“bad actors”), audio-video synchronization in multi-language dubbed versions, age-spanning of actors’ roles, and synthesis of difficult action, reducing the impact of actors’ own limitations on film and TV productions. For example, for the CCTV documentary China Reinvents Itself, CCTV and iFlytek used AI algorithms to learn from the voice recordings of the late dubbing artist Li Yi’s past documentaries and synthesized narration from the documentary’s script. With editing and optimization in post-production, Li Yi’s voice was ultimately reproduced. In Healer of Children, broadcast in 2020, a scandal over the lead actor’s academic credentials affected publicity and distribution, and intelligent face-swapping technology was used to replace the lead actor, reducing the production’s losses. In 2021, the British company Flawless launched the visualization tool TrueSync to address unsynchronized lip movements in multilingual dubbed versions. It uses AI-based deep video synthesis to adjust actors’ facial features precisely so that their lip movements match dubbing or subtitles in different languages. Second, AI synthesis of virtual physical scenes generates scenes that are impossible or too costly to film in real life, greatly broadening the imaginative boundaries of film and TV works and giving audiences better visual effects and auditory experiences. In the 2017 hit Detective Samoyeds, for example, a large number of scenes were virtually generated through AI technology. The crew collected a large amount of scene material early on, special effects staff built digital models to produce simulated shooting scenes, and the actors performed in a green screen studio. Real-time matting then fused the actors’ movements with the virtual scenes to produce the final footage.[6]

AIGC扩展角色和场景创作空间。一是通过人工智能合成人脸、声音等相关内容,实现“数字复活”已故演员、替换“劣迹艺人”、多语言译制片音画同步、演员角色年龄的跨越、高难度动作合成等,减少由于演员自身局限对影视作品的影响。如央视纪录片《创新中国》中,央视和科大讯飞利用人工智能算法学习已故配音员李易过往纪录片的声音资料,并根据纪录片的文稿合成配音,配合后期的剪辑优化,最终让李易的声音重现。在2020年播出的《了不起的儿科医生》中,主角人物的学历事件影响了影视作品的宣传与发行,该作品便采用了智能影视换脸技术将主角人物进行替换,从而减少影视作品创作过程中的损失。2021年,英国公司Flawless针对多语言译制片中角色唇形不同步的问题推出了可视化工具TrueSync,能通过AI深度视频合成技术精准调整演员的面部特征,让演员的口型和不同语种的配音或字幕相匹配。二是通过人工智能合成虚拟物理场景,将无法实拍或成本过高的场景生成出来,大大拓宽了影视作品想象力的边界,给观众带来更优质的视觉效果和听觉体验。如2017年热播的《热血长安》,剧中的大量场景便是通过人工智能技术虚拟生成。工作人员在前期进行大量的场景资料采集,经由特效人员进行数字建模,制作出仿真的拍摄场景,演员则在绿幕影棚进行表演,结合实时抠像技术,将演员动作与虚拟场景进行融合,最终生成视频。

AIGC empowers film and TV editing and upgrades post-production. First, it repairs and restores film and TV images and improves the clarity of image materials, safeguarding the picture quality of film and TV works. For example, the China Film Digital Production Base and the University of Science and Technology of China jointly developed the “China Film Shensi” AI image processing system, which has successfully restored many film and TV productions such as Amazing China and Street Angel. With the Shensi system, the time needed to restore a film can be cut by three-quarters and the cost by half. Meanwhile, streaming platforms such as iQIYI, Youku, and Xigua Video have begun to treat AI-based restoration of classic films and television shows as a new growth area. Second, it generates trailers. After learning the audiovisual techniques of hundreds of thriller trailers, IBM’s AI system Watson selected shots from the 90-minute film Morgan that fit the characteristics of a thriller trailer and produced a 6-minute trailer. Although production staff had to rework the trailer before it was finalized, the process cut the trailer production cycle from about a month to 24 hours. Third, it automatically converts film and TV content from 2D to 3D. “Zhengrong,” the AI-based automatic 3D content production platform launched by DreamWld Tech, supports dimensional conversion of film and TV works and raises the efficiency of theater-grade 3D conversion more than a thousandfold.

AIGC赋能影视剪辑,升级后期制作。一是实现对影视图像进行修复、还原,提升影像资料的清晰度,保障影视作品的画面质量。例如中影数字制作基地和中国科技大学共同研发的基于AI的图像处理系统“中影·神思”,成功修复《厉害了,我的国》《马路天使》等多部影视剧。利用AI神思系统,修复一部电影的时间可以缩短四分之三,成本可以减少一半。同时,爱奇艺、优酷、西瓜视频等流媒体平台都开始将AI修复经典影视作品作为新的增长领域开拓。二是实现影视预告片生成。IBM旗下的人工智能系统Watson在学习了上百部惊悚预告片的视听手法后,从90分钟的《Morgan》影片中挑选出符合惊悚预告片特点的电影镜头,并制作出一段6分钟的预告片。尽管这部预告片需要在制作人员的重新修改下才能最终完成,但却将预告片的制作周期从一个月左右缩减到24小时。三是实现将影视内容从2D向3D自动转制。聚力维度推出的人工智能3D内容自动制作平台“峥嵘”支持对影视作品进行维度转换,将院线级3D转制效率提升1000多倍。
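The trailer workflow described above, in which shots that fit a target style are picked from a full-length film and assembled into a short cut, can be pictured as a selection problem under a time budget. The sketch below is a toy greedy selector, not IBM's method. The `Shot` structure and the `score` field (standing in for whatever a trained model would output) are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Shot:
    start_s: float  # start time within the film, in seconds
    end_s: float    # end time within the film, in seconds
    score: float    # hypothetical "fits the trailer style" score in [0, 1]

    @property
    def duration(self) -> float:
        return self.end_s - self.start_s


def pick_shots(shots: list[Shot], budget_s: float) -> list[Shot]:
    """Greedily keep the highest-scoring shots that still fit the budget,
    then return them in their original story order."""
    chosen: list[Shot] = []
    remaining = budget_s
    for shot in sorted(shots, key=lambda s: s.score, reverse=True):
        if shot.duration <= remaining:
            chosen.append(shot)
            remaining -= shot.duration
    return sorted(chosen, key=lambda s: s.start_s)


if __name__ == "__main__":
    film = [
        Shot(0, 10, 0.2),
        Shot(10, 15, 0.9),
        Shot(15, 30, 0.8),
        Shot(30, 34, 0.7),
    ]
    for shot in pick_shots(film, budget_s=10):
        print(shot.start_s, shot.end_s, shot.score)
```

A greedy pass like this yields only a rough cut. As the text notes, production staff still had to rework the machine-selected trailer before it was finalized.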

(iv) AIGC + entertainment: Expanding boundaries and gaining development momentum

(四)AIGC+娱乐:扩展辐射边界,获得发展动能

In the digital economy era, entertainment not only brings consumers closer to products and services, but also indirectly satisfies modern people’s desire for a sense of belonging, which is becoming increasingly important. With the help of AIGC technology, through the generation of interesting images, audio, and video, the creation of virtual idols, and the development of digital avatars for consumers, the entertainment industry can rapidly expand its own boundaries radially in ways that are more readily accepted by consumers, thereby gaining new growth momentum.

在数字经济时代,娱乐不仅拉近了产品服务与消费者之间的距离,而且间接满足了现代人对归属感的渴望,重要性与日俱增。借助于AIGC技术,通过趣味性图像或音视频生成、打造虚拟偶像、开发C端用户数字化身等方式,娱乐行业可以迅速扩展自身的辐射边界,以更加容易被消费者所接纳的方式,获得新的发展动能。

Generating entertaining images, audio, and video stimulates user participation. In image and video generation, AIGC applications typified by AI-based face swapping cater to users’ appetite for novelty and have become powerful tools for reaching beyond a core audience. For example, image and video synthesis apps such as FaceApp, ZAO, and Avatarify went viral as soon as they launched and topped the App Store free download chart. An interactive app launched by the People’s Daily New Media Center for the 70th anniversary of National Day, which generated portraits of users in the dress of the 56 ethnic groups, flooded users’ social feeds, with more than 738 million photos synthesized. In March 2020, Tencent launched a campaign in Game for Peace that let users take pictures together with the Chinese idol girl group Rocket Girls 101. Such interactive content strongly engaged users’ emotions and spread rapidly across social circles. In voice synthesis, voice modification adds to the fun of interaction. For example, QQ and many other social apps, as well as Game for Peace and many other games, have integrated voice-changing functions that let users try out different voices, such as “big uncle” and “cute girl,” turning communication into an endlessly enjoyable game.

实现趣味性图像或音视频生成,激发用户参与热情。在图像视频生成方面,以AI换脸为代表的AIGC应用极大满足用户猎奇的需求,成为破圈利器。例如FaceAPP、ZAO、Avatarify等图像视频合成应用一经推出,就立刻病毒式在网络上引发热潮,登上AppStore免费下载榜首位;人民日报新媒体中心在国庆70周年推出互动生成56个民族照片人像的应用刷屏朋友圈,合成照片总数超7.38亿张;2020年3月,腾讯推出化身游戏中的“和平精英”与火箭少女101同框合影的活动,这些互动的内容极大地激发出了用户的情感,带来了社交传播的迅速破圈。在语音合成方面,变声增加互动娱乐性。如QQ等多款社交软件、和平精英等多款游戏均已集成变声功能,支持用户体验大叔、萝莉等多种不同声线,让沟通成为一种乐此不疲的游戏。
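The simplest way to picture a voice-changing effect of the kind mentioned above is pitch shifting by resampling. The sketch below is a minimal illustration in pure Python and does not represent how QQ or any game implements the feature. Production voice changers typically preserve duration and vocal timbre, which plain resampling does not. All function names are illustrative.

```python
import math


def sine_wave(freq_hz: float, seconds: float, sample_rate: int = 8000) -> list[float]:
    """Generate a test tone standing in for a recorded voice."""
    n = int(seconds * sample_rate)
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]


def shift_pitch(samples: list[float], factor: float) -> list[float]:
    """Resample with linear interpolation.

    factor > 1 raises the pitch and shortens the clip;
    factor < 1 lowers the pitch and lengthens it.
    """
    if factor <= 0:
        raise ValueError("factor must be positive")
    last = len(samples) - 1
    out = []
    for j in range(int(len(samples) / factor)):
        pos = j * factor
        i = min(int(pos), last)
        frac = pos - i
        nxt = samples[min(i + 1, last)]
        out.append(samples[i] * (1 - frac) + nxt * frac)
    return out


def estimate_freq(samples: list[float], sample_rate: int = 8000) -> float:
    """Estimate pitch by counting upward zero crossings per second."""
    if len(samples) < 2:
        return 0.0
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if a < 0 <= b)
    return crossings * sample_rate / len(samples)


if __name__ == "__main__":
    voice = sine_wave(200.0, 1.0)       # a 200 Hz tone
    higher = shift_pitch(voice, 1.5)    # played back at the same sample rate
    lower = shift_pitch(voice, 0.5)
    print(round(estimate_freq(voice)), round(estimate_freq(higher)),
          round(estimate_freq(lower)))
```

Raising the factor moves the tone toward a higher voice and lowering it moves the tone toward a deeper one, which is the basic idea behind presets such as the ones named in the text.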

Creating virtual idols and releasing IP value. First, co-creating synthesized songs with users continually deepens fan loyalty. “Virtual singers,” represented by Hatsune Miku and Luo Tianyi, are virtual characters built on the VOCALOID voice synthesis engine. A real person provides the sound source and the software synthesizes the singing voice, so fans can take a deep part in co-creating with these virtual singers. Take Luo Tianyi as an example: anyone who writes lyrics and music using the voice library can achieve the effect of “Luo Tianyi singing a song.” In the ten years since Luo Tianyi’s debut on July 12, 2012, musicians and fans have created more than 10,000 works for her, which gives users more room for imagination and creativity while building deeper connections with fans. Second, AI-synthesized audio, video, and animation support content monetization by virtual idols in more diverse scenarios. As audio and video synthesis, holographic projection, AR, VR, and other technologies mature, the ways of monetizing virtual idols have gradually diversified and now include concerts, music albums, advertising endorsements, livestreaming, and derivative merchandise. As the commercial value of virtual idols continues to be uncovered, brands are increasingly willing to partner with virtual IP. For example, “Ling Ling” [翎 Ling], an internet celebrity created jointly by Xmov Ai and Next Generation, debuted in May 2020 and has since worked with VOGUE, Tesla, GUCCI, and other brands.

打造虚拟偶像,释放IP价值。一是实现与用户共创合成歌曲,不断加深粉丝黏性。以初音未来和洛天依为代表的“虚拟歌姬”,都是基于VOCALOID语音合成引擎软件为基础创造出来的虚拟人物,由真人提供声源,再由软件合成人声,都是能够让粉丝深度参与共创的虚拟歌手。以洛天依为例,任何人通过声库创作词曲,都能达到“洛天依演唱一首歌”的效果。从2012年7月12日洛天依出道至今十年的时间内,音乐人以及粉丝已为洛天依创作了超过一万首作品,通过为用户提供更多想象和创作空间的同时,与粉丝建立了更深刻联系。二是通过AI合成音视频动画,支撑虚拟偶像在更多元的场景进行内容变现。随着音视频合成、全息投影、AR、VR等技术的成熟,虚拟偶像变现场景逐步多元化,目前可通过演唱会、音乐专辑、广告代言、直播、周边衍生产品等方式进行变现。同时随着虚拟偶像商业价值被不断发掘,品牌方与虚拟IP的联动意愿随之提升。如由魔珐科技与次世文化共同打造的网红翎Ling于2020年5月出道至现在已先后与VOGUE、特斯拉、GUCCI等品牌展开合作。

Developing digital avatars for consumers and laying the groundwork for the consumer metaverse. Since Apple released Animoji on the iPhone in 2017, avatar technology has evolved from single cartoon animal heads to AI-generated cartoon likenesses of real people, giving users more creative autonomy and a more vivid image library. Major technology giants are actively exploring avatar-related applications, accelerating their preparations for a future in which the “virtual digital world” and the real world merge. For example, at the 2020 World Internet Conference, Baidu demonstrated the ability to design dynamic virtual characters using AI technologies such as 3D virtual image generation and virtual image actuation. Taking a single photo on site was enough to generate, within a few seconds, a virtual image that could mimic the user’s expressions and movements. In the developer exhibition area of the 2021 Apsara Conference, Alibaba Cloud demonstrated its latest technology, the Cartoon Smart Drawing project, which became a conference hit and attracted nearly 2,000 people to try it. Cartoon Smart Drawing uses a latent variable mapping approach. Given a picture of a person’s face, it identifies distinctive features such as eye size and nose shape and automatically generates a virtual image with personal characteristics; it can also track the user’s facial expressions to generate real-time animation, giving ordinary people the chance to create their own cartoon images. In the foreseeable future, avatars, as the user’s personal identity and vehicle for interaction in the virtual world, will be further integrated into people’s work and daily lives and will drive the development of a virtual goods economy.

开发C端用户数字化身,布局消费元宇宙。自2017年苹果手机发布Animoji以来,“数字化身”技术迭代经历了由单一卡通动物头像,向AI自动生成拟真人卡通形象的发展,用户拥有更多创作的自主权和更生动的形象库。各大科技巨头均在积极探索“数字化身”相关应用,加速布局“虚拟数字世界”与现实世界大融合的“未来”。例如百度在2020年世界互联网大会上展现了基于3D虚拟形象生成和虚拟形象驱动等AI技术设计动态虚拟人物的能力。在现场只需拍摄一张照片,就能在几秒内快速生成一个可以模仿“我”的表情、动作的虚拟形象。在2021年的云栖大会开发者展区,阿里云展示了最新技术——卡通智绘项目,吸引了近2000名体验者,成为了大会爆款。阿里云卡通智绘采用了隐变量映射的技术方案,对输入人脸图片,发掘其显著特征如眼睛大小、鼻型等,可以自动化生成具有个人特色的虚拟形象,同时还可跟踪用户的面部表情生成实时动画,让普通人也能有机会创造属于自己的卡通形象。在可预见的未来,作为用户在虚拟世界中个人身份和交互载体的“数字化身”,将进一步与人们的生产生活相融合,并将带动虚拟商品经济的发展。

(v) AIGC + other: Promoting digital-real integration and accelerating industrial upgrading

(五)AIGC+其他:推进数实融合,加快产业升级

In addition to the above industries, AIGC applications are also developing rapidly in other industries such as education, finance, healthcare, and manufacturing. In the education field, AIGC is breathing new life into educational materials. Compared with traditional methods such as reading and lectures, AIGC provides educators with new tools to deliver knowledge to students in more vivid and convincing ways by making originally abstract and flat textbooks concrete and three-dimensional. For example, videos can be made of historical figures talking directly to students, injecting new vitality into an unappealing lecture; realistic virtual teachers can be synthesized to make digital teaching more interactive and interesting, and so on. In the financial field, AIGC is helping to reduce costs and raise efficiency. On one hand, AIGC can automate the production of financial information and product introduction videos, improving the efficiency of financial institutions’ content operations. On the other hand, AIGC can be used to build dual-channel (audio and video) customer service with virtual digital humans, bringing greater warmth to financial services. In the medical field, AIGC is empowering the whole diagnosis and treatment process. In assisted diagnosis, AIGC can be used to improve the quality of medical images, enter electronic medical records, and so on, freeing up doctors’ mental effort and energy so that they can focus on their core work, thereby improving the professional capability of physicians as a group. In rehabilitation therapy, AIGC can synthesize speech audio for people who have lost their voices, synthesize limb projections for people with disabilities, and synthesize non-threatening medical companionship for patients with mental illnesses. By comforting patients in humane ways, it can soothe their emotions and accelerate their recovery.
In the manufacturing field, AIGC is increasing industrial efficiency and value. First, integrating AIGC into computer-aided design (CAD) greatly shortens the engineering design cycle. By automating repetitive, time-consuming, and low-level tasks in engineering design, AIGC can cut engineering design work that would otherwise take thousands of hours down to a matter of minutes. It also supports the generation of derived designs to provide inspiration for engineers and designers. In addition, it supports the introduction of changes in designs to achieve dynamic simulation. For example, for its BMW VISION NEXT 100 concept car, BMW used AIGC-assisted design to develop the car’s interior and its dynamic and functional exterior skin. Second, AIGC accelerates the construction of digital twin systems. By quickly transforming digital geometries formed based on physical environments into real-time parametric 3D modeling data, digital twins of real-world factories, industrial equipment, production lines, etc. can be created efficiently. Overall, AIGC is developing into a horizontal technology deeply integrated with various other industries, and its applications are accelerating their penetration into all aspects of the economy and society.

除以上行业之外,教育、金融、医疗、工业等各行各业的AIGC应用也都在快速发展。教育领域,AIGC赋予教育材料新活力。相对于阅读和讲座等传统方式,AIGC为教育工作者提供了新的工具,使原本抽象、平面的课本具体化、立体化,以更加生动、更加令人信服的方式向学生传递知识。例如制作历史人物直接与学生对话的视频,给一场毫无吸引力的演讲注入新的活力;合成逼真的虚拟教师,让数字教学更具互动性和趣味性等。金融领域,AIGC助力实现降本增效。一方面可通过AIGC实现金融资讯、产品介绍视频内容的自动化生产,提升金融机构内容运营的效率;另一方面,可通过AIGC塑造视听双通道的虚拟数字人客服,让金融服务更有温度。医疗领域,AIGC赋能诊疗全过程。在辅助诊断方面,AIGC可用于改善医学图像质量、录入电子病历等,完成对医生的智力、精力的解放,让医生资源专注到核心业务中,从而实现医生群体业务能力的提升。在康复治疗方面,AIGC可以为失声者合成语言音频,为残疾者合成肢体投影,为心理疾病患者合成无攻击感的医护陪伴等,通过用人性化的方式来抚慰患者,从而舒缓其情绪,加速其康复。工业领域,AIGC提升产业效率和价值。一是融入计算机辅助设计CAD(Computer-aided Design),极大缩短工程设计周期。AIGC通过将工程设计中重复的、耗时的和低层次的任务自动化,可使原来需要耗费数千小时的工程设计缩短到分钟级。同时支持生成衍生设计,为工程师或设计师提供灵感。此外,还支持在设计中引入变化,实现动态模拟。如宝马公司在其BMW VISION NEXT 100概念车中通过AIGC辅助设计开发了汽车动态功能性外表皮和内饰。二是加速数字孪生系统的构建。通过将基于物理环境形成的数字几何图形,快速转化为实时参数化的3D建模数据,高效创建现实世界中工厂、工业设备和生产线等的数字孪生系统。总体来看,AIGC正在发展成与其他各类产业深度融合的横向结合体,其相关应用正加速渗透到经济社会的方方面面。

IV. Problems facing AIGC’s development

四、人工智能生成内容发展面临的问题

Now that AI technology development has entered the fast lane, AIGC is playing important roles in all aspects of social production and life because of its rapid response capability, lively knowledge output, and abundant application scenarios. At the same time, however, AIGC’s key technologies, the core capabilities of enterprises, and relevant laws and regulations have not yet been perfected, and disputes around fairness, responsibility, and safety are proliferating, triggering a series of problems in urgent need of solution.

随着人工智能技术发展步入快车道,AIGC因为其快速的反应能力、生动的知识输出、丰富的应用场景,在社会生产和生活的方方面面发挥着重要的作用。但与此同时,AIGC的关键技术、企业核心能力和相关法律法规尚未完善,围绕公平、责任、安全的争议日益增多,引发了一系列亟待解决的问题。

Key technologies are not yet fully mature, and there are still problem areas and difficulties in large-scale promotion and implementation. At present, AIGC technology is constantly being upgraded to further unlock content productivity, but key AI technologies still have limitations that impede the industry development process. First, AI algorithms have inherent flaws. AI algorithms have yet to overcome technical limitations in terms of transparency, robustness, bias, and discrimination, leading to numerous problems in algorithm application. Transparency: Due to the black-box operation mechanisms of algorithm models, their operation rules and causal logic are not obvious to developers. This characteristic makes the generation mechanisms of AI algorithms difficult for humans to understand and interpret, and once an algorithm makes an error, the lack of transparency will undoubtedly hinder external observers from correcting and removing errors. Robustness: Algorithm operation is prone to interference from data, models, training methods, and other factors, giving rise to non-robustness. For example, when the amount of training data is insufficient, an algorithm that has tested well on a specific dataset is likely to be affected by slight perturbations from small amounts of random noise, causing the model to give incorrect conclusions. After the algorithm is put into application, as online data content is updated, the algorithm is likely to produce deviations in system performance, which may lead to system failure. Bias and discrimination: Algorithms use data as raw material. If data used initially has biases, those biases may persist over time, invisibly affect the results of AI algorithm operation, and ultimately lead to bias or discrimination in the content generated by algorithms, triggering user disputes about the fairness of the algorithms. Second, AIGC content editing and creation technology is imperfect. 
AI-enabled content editing and creation technology is still constrained by shortcomings, resulting in technical barriers to industry development. Text generation: Enterprises face bottlenecks in natural language understanding technology. Very often, templates are simplistically applied to generate mechanistic filler, resulting in similar and monotonous text structures. Moreover, it is difficult for text generation to truly produce emotional, anthropomorphic expression, which falls short of users’ expectations that text synthesis products be easy to read and of high quality. Speech synthesis: Problems such as lack of fluency in speech expression and strongly mechanical-seeming voices are conspicuous. Emotional embedding in speech requires large-scale data volumes to support training, and the modeling requirements are very high, which increases the complexity of use and also makes it difficult to control the corresponding costs, restricting enterprises from unlocking the technology’s value. Visual generation: Problems include less-than-ideal intelligent image processing results and insufficiently accurate real-time motion capture. In applications, because large vision models cannot adequately complete multiple visual perception tasks simultaneously, machine vision cannot deliver accuracy, fidelity, and realism all at once, so manual labeling is required at a later stage. Thus, the problems of high technical barriers and low production efficiency have not been well solved.

关键技术不够完全成熟,大规模推广落地尚存痛点、难点。目前,AIGC技术不断升级,进一步释放内容生产力,但其在人工智能关键技术方面尚存在局限,掣肘产业发展进程。一是人工智能算法存在固有缺陷。人工智能算法在透明度、鲁棒性、偏见与歧视方面存在尚未克服的技术局限,导致算法应用问题重重。在透明度方面,由于算法模型的黑箱运作机制,其运行规律和因果逻辑并不会显而易见的摆在研发者面前。这一特性使人工智能算法的生成机理不易被人类理解和解释,一旦算法出现错误,透明度不足无疑将阻碍外部观察者的纠偏除误。在鲁棒性方面,算法运行容易受到数据、模型、训练方法等因素干扰,出现非鲁棒特征。例如,当训练数据量不足的情况下,在特定数据集上测试性能良好的算法很可能被少量随机噪声的轻微扰动影响,从而导致模型给出错误的结论;在算法投入应用之后,随着在线数据内容的更新,算法很可能会产生系统性能上的偏差,进而引发系统的失灵。在偏见与歧视方面,算法以数据为原料,如果初始使用的是有偏见的数据,这些偏见可能会随着时间流逝一直存在,无形中影响着算法运行结果,最终导致AI算法生成的内容存在偏见或歧视,引发用户对于算法的公平性争议。二是AIGC内容编辑与创作技术不够完善。人工智能技术加持的内容编辑与创作技术仍然受短板制约,导致产业发展存在技术门槛。文本生成方面,企业在自然语言理解技术上存在瓶颈,往往只简单地套用模板生成机械化的填充,导致文本结构雷同、千篇一律,而且难以真正产出感性的、拟人的表达,背离用户对于文本合成产品的易读化、优质化期待。语音合成方面,语音表达不够流畅、声音机械感较强等问题突出。语音的情感嵌入需要大规模的数据量支持训练,并且对于建模的要求非常高,由此导致使用复杂度提升,也使得相应的成本难以控制,制约企业释放技术价值。视觉生成方面,存在智能图像的处理效果不够理想,实时动作捕捉精准度不足等问题。在应用中,由于视觉大模型同时完成多种视觉感知任务的能力不足,机器视觉的精准度、还原度、仿真度不能周全,需要后期人工标注,因而技术门槛高、制作效率低的问题没有得到很好解决。
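The robustness point above (a model trained on too little data can test well and still be upset by a slight perturbation) can be illustrated with a minimal sketch. Everything here is an assumption made for illustration and does not come from the white paper: the one-dimensional threshold classifier, the helper names `fit_threshold`, `predict`, and `accuracy`, the toy data, and the fixed `shift` that stands in for random noise so the result is reproducible.

```python
from statistics import mean

def fit_threshold(samples):
    """Fit a 1-D classifier: the threshold is the midpoint of the two class means."""
    neg = [x for x, y in samples if y == 0]
    pos = [x for x, y in samples if y == 1]
    return (mean(neg) + mean(pos)) / 2

def predict(threshold, x):
    """Label 1 if the input lies above the threshold, else label 0."""
    return 1 if x > threshold else 0

def accuracy(threshold, data, shift=0.0):
    """Accuracy when every input is moved by `shift` (a stand-in for noise)."""
    hits = sum(predict(threshold, x + shift) == y for x, y in data)
    return hits / len(data)

# Hypothetical toy data: a deliberately tiny training set of two points.
train = [(0.40, 0), (0.60, 1)]
test = [(0.30, 0), (0.45, 0), (0.55, 1), (0.70, 1)]

threshold = fit_threshold(train)
clean_acc = accuracy(threshold, test)              # unperturbed test inputs
noisy_acc = accuracy(threshold, test, shift=0.06)  # slightly perturbed inputs

print(f"threshold={threshold:.2f} clean={clean_acc:.2f} noisy={noisy_acc:.2f}")
```

In this toy setting the classifier is perfect on the clean test set, yet a shift of 0.06 pushes the point at 0.45 across the decision boundary and one prediction in four becomes wrong. Real AIGC models are far larger, but the same pattern (good benchmark numbers, thin margins) is what the passage describes.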

The core capabilities of enterprises are uneven, threatening the healthy and secure development of the web content ecosystem. With the open-sourcing and openness of digital technology, AIGC technology R&D barriers and production costs have been continuously lowered, resulting in a mixed bag of platform enterprises in the market, and the dearth of core competencies among enterprises has caused serious obstacles to the construction of a good network ecosystem. First, content moderation ability needs to be improved. In recent years, AIGC enterprises, as entities with primary responsibility for internet content governance, have implemented their responsibility by establishing content moderation mechanisms, and “machine moderation + human moderation” has become their basic moderation method. In terms of machine moderation, the moderation accuracy rate is affected by the type of moderation, the multiplicity of content violation variants, and the intensification of countermeasure efforts by illegal and gray-area industries, resulting in a high rate of false alarms and the need for overlaid manual moderation. As for human moderation, the use of human moderation outsourcing services has become the mainstream in the market, but the performance of different human moderation teams varies with respect to personnel management, business process management, moderation capabilities, etc., and the industry has not formed a unified standard. Overall, the lack of qualified moderators may lead to an outpouring of illegal and illicit content containing fake and undesirable information, seriously harming the industry and even the entire network ecosystem. Second, enterprises need to further build their technology management ability. Because AIGC technology is becoming more and more complex, and its application in enterprises is often highly dynamic, enterprises, as technology designers and service providers, need to have corresponding technology management capabilities. 
However, enterprises are commercial in nature, and where resources are limited, this often means they will tend to satisfy their own interests first and invest insufficiently in technological security and institutional safeguards. In this respect, the gaps between enterprises are very obvious. Enterprises with “deep pockets” and long development histories are more likely to have better levels of technological protection and management, and vice versa. Many small enterprises entering the market put AIGC into application before their technology management ability is up to standard, providing a hotbed for plagiarism, infringement, content faking, malicious marketing, and other illegal and gray-area industry chains. Third, enterprises have yet to perfect their risk governance capacity. The “Guiding Opinions on Strengthening the Comprehensive Governance of Network Information Service Algorithms” clearly proposes strengthening the requirement for enterprises to shoulder primary responsibility. Enterprises should build and perfect AI management capacity and effectively prevent all risks in the process of AI development. However, AIGC technology is currently still in the early stages of development, and its risks are characterized by unknowns and complexity. Many enterprises have not yet perfected their risk prediction, prevention, and emergency response abilities, and the risk governance concept has not been implemented in engineering and technology practices. As a result, enterprises are likely to miss opportunities to nip risks in the bud and are left on the defensive in the complex network security game. Once they suffer internal threats or external attacks, security risks to the network information content ecosystem can very easily follow.

企业核心能力参差不齐,威胁网络内容生态健康安全发展。随着数字技术的开源开放,AIGC技术研发门槛、制作成本等不断降低,致使市场上的平台企业泥沙俱下,企业核心能力不足对良好网络生态构建造成严重障碍。一是内容审核能力有待提升。近年来,各AIGC企业通过建立内容审核机制的方式落实互联网内容治理主体责任,“机审+人审”已成为其基本审核方式。在机审方面,审核准确率受审核类型、内容违规变种繁杂、网络黑灰产对抗手段加剧等影响而导致误报率偏高,需要人工叠加审核。在人审方面,使用人审外包服务已经成为市场主流,但不同的人审团队在人员管理、业务流程管理、审核能力等方面表现各异,行业内也未形成统一的标准。总体而言,缺乏合格的审核人员可能会导致包含虚假、不良信息的违法违规内容流出,严重影响产业甚至整个网络生态环境。二是企业技术管理能力建设不足。由于AIGC技术愈发复杂,且在企业中的运用往往具有高动态性等特点,要求企业作为技术设计者和服务提供者具备相应的技术管理能力。然而,企业具有商业属性,这就决定了在资源有限的情况下其往往倾向于首先满足自身利益,而对技术安全和制度保障投入不足。在这方面,各企业的差距十分明显。投资积累“家底”厚、发展时间长的企业,就更有可能技术防护和管理水平较好,反之不然。诸多初入市场的小型企业在技术管理能力不达标的情况将AIGC投入应用,为抄袭侵权、内容造假、恶意营销等灰黑产业链提供温床。三是企业风险治理能力尚未完善。《关于加强互联网信息服务算法综合治理的指导意见》明确提出强化企业主体责任。企业应构建完善的人工智能管理能力,切实防范人工智能发展过程中的各项风险。但是,当前AIGC技术仍处于发展初期,其风险具有未知性和复杂性等特点,很多企业对于对风险的预测、防范和应急处置能力均尚未完善,风险治理理念也未落实到工程技术实践中。这一问题导致企业很可能错失把风险拦截在萌芽状态的机会,在复杂的网络安全博弈中处于被动,一旦遭受内部威胁或外部攻击,极易引发网络信息内容生态安全风险。
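The “machine moderation + human moderation” arrangement described above can be sketched as a simple routing rule: the machine decides the clear cases and escalates the uncertain ones to people. The sketch below is hypothetical throughout. The keyword-based `machine_score`, the `BLOCKLIST` terms, and the `low` and `high` thresholds are illustrative assumptions, not any enterprise’s actual moderation system, which would typically rely on trained classifiers rather than word lists.

```python
from dataclasses import dataclass

# Hypothetical flagged terms; a real system would use a trained model.
BLOCKLIST = {"scam", "fake"}

@dataclass
class Decision:
    text: str
    score: float
    route: str  # "pass", "block", or "human_review"

def machine_score(text: str) -> float:
    """Toy risk score: the share of words that appear in the blocklist."""
    words = [w.strip(".,!?") for w in text.lower().split()]
    if not words:
        return 0.0
    return sum(w in BLOCKLIST for w in words) / len(words)

def route(text: str, low: float = 0.1, high: float = 0.5) -> Decision:
    """Auto-pass low scores, auto-block high scores, escalate the rest."""
    score = machine_score(text)
    if score >= high:
        outcome = "block"
    elif score <= low:
        outcome = "pass"
    else:
        outcome = "human_review"
    return Decision(text, score, outcome)

for sample in ["a calm review of a new phone",
               "is this offer a scam",
               "fake scam"]:
    decision = route(sample)
    print(f"{decision.route:13s} score={decision.score:.2f}  {sample}")
```

The width of the band between `low` and `high` is the practical trade-off: a high machine false-alarm rate, as the passage notes, widens the band and pushes more items to human reviewers, which is where uneven team quality and the absence of a unified standard start to matter.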

The relevant rules and guidelines still need to be improved, and there is a mismatch problem between development and governance. AI industry rules and guidelines have been launched continually in recent years, and the governance system has begun to take shape, but as the progress of science and technology accelerates, institutional construction may not always keep up with it. This in turn gives rise to a mismatch problem between the development of technological innovation on one hand and policy support and legal regulation on the other. First, policies to support the industry’s development need to be implemented. China’s 14th Five-Year Plan, issued in March 2021, put forward the policy of “forging new digital economy advantages” and emphasized the important value of AI and other emerging digital industries in improving national competitiveness. Guided by this planning, and faced with the rapid development of AIGC-related industries, especially digital cultural industries, the central government has issued a number of policies to promote the development of new digital cultural industries. The latest “Opinions on Promoting the Implementation of the National Cultural Digitization Strategy,” issued in May 2022, calls for the study and formulation of industrial policies to support cultural digitization, emphasizing that localities should formulate specific implementation plans according to local conditions, and that relevant agencies should refine their policies and measures. In the future, the strength of support, promotion of implementation, and dynamic adjustment of policies of different localities and agencies will determine the degree of mutual construction between technology and society, which will play an important role in the development of AIGC technology in social contexts. Second, the copyrightability of AIGC has yet to be clarified. 
Currently, China’s Copyright Law stipulates that the objects of copyright are “works.” Looking at the legal text alone, China’s current intellectual property law system stipulates that a subject of law is a person who enjoys rights, has obligations, and bears responsibilities, so it is difficult for intelligent content not produced by humans to obtain copyright protection through the logic of “work-creation-author.”7 This view was supported in a 2019 judgment by the Beijing Internet Court. However, in the 2020 case of Tencent v. WDZJ.com, which concerned the republication of an article written automatically by a bot, the Shenzhen Nanshan District Court held that an article written by AI is a copyright-protected work if it meets the requirement of originality. Ambiguity in the legal concepts has produced conflicting judicial decisions, leading to the real-life dilemma of unclear copyright attribution for AIGC works. This problem may not only leave works created using AIGC technology without copyright protection, keeping AI technology from realizing its creative value, but may also, because of the massive copying behavior of AI, dilute the originality of rights holders of existing works, threatening the legitimate rights and interests of others. Third, the new technology makes supervision more difficult. In recent years, with the continuous maturation of AI technology, the content generated by machines after deep learning has become increasingly realistic, achieving the effect of “confusing fake and real.” At the same time, the application threshold is also decreasing. Everyone can easily “swap faces,” “modify voices,” and even join an “internet troll army.” Because fake content fits the public’s universal “seeing is believing” cognitive trait, misuse of the technology is likely to deliver such content to users through the internet instantly and in highly credible ways, causing the public’s judgment to fail in the game of ideas and making it difficult to identify trolls and false information. 
This in turn involves another real problem. That is, due to the virtual identity cloak provided by the internet and the development of related technologies, the producers of fake content are decentralized, mobile, large in number, and hidden, which makes tracking them an ever more difficult and complex task. Coupled with the vagueness and lagging nature of rules and guidelines, defining the boundaries for borderline counterfeiting behaviors poses a real dilemma, and this undoubtedly creates serious obstacles to the regulation of content.

相关规范指引尚需完善,发展与治理之间存在匹配问题。近年来,人工智能产业规范指引不断推出,治理体系初显格局,但随着科技进步加快,制度建设亦步亦趋也未必严丝合缝,这又引发了技术创新发展与政策支持、法律规制的匹配问题。一是产业发展需落实支持政策。2021年3月,我国十四五规划纲要出台,提出“打造数字经济新优势”的建设方针并强调了人工智能等新兴数字产业在提高国家竞争力上的重要价值。在规划纲要的指引下,面对人工智能生成内容关联产业——尤其是数字文化产业的迅速发展,中央政府相继出台了多项政策推动发展数字文化产业新型业态。2022年5月,最新出台的《关于推进实施国家文化数字化战略的意见》,要求研究制定扶持文化数字化建设的产业政策,强调各地要因地制宜制定具体实施方案,相关部门要细化政策措施。未来,各地、各部门政策的支持力度、推进落实和动态调整情况将决定着技术与社会的相互建构程度,将对AIGC技术在社会情境中的发展起到重要作用。二是AIGC可版权性有待厘清。当前,我国《著作权法》中规定,著作权的指向对象为“作品”。仅从法律文本来看,我国现行知识产权法律体系均规定法律主体为享有权利、负有义务和承担责任的人,因此非人生产的智能化内容难以通过“作品—创作—作者”的逻辑获得著作权的保护,这一观点获得了2019年北京互联网法院的判决支持。而在2020年腾讯公司诉网贷之家网站转载机器人自动撰写的文章作品一案中,深圳南山区法院认为在满足独创性要求的情况下,人工智能撰写的文章属于著作权保护的作品。法律概念的模糊引发司法裁判的翻转,导致AIGC作品存在着著作权归属不清的现实困境。这一问题不仅可能导致使用AIGC技术创作的作品无法获得著作权保护,阻碍人工智能技术发挥其创作价值,还有可能因人工智能的海量摹写行为稀释既有作品权利人的独创性,威胁他人的合法权益。三是新技术增加监管难度。近年来,随着人工智能技术不断成熟,机器深度学习后生成的内容愈发逼真,能够达到“以假乱真”的效果。相应地,应用门槛也在不断降低,人人都能轻松实现“换脸”、“变声”,甚至成为“网络水军”中的一员。由于契合民众“眼见为实”的认知共性,技术滥用后很可能使造假内容以高度可信的方式通过互联网即时触达用户,导致公众在观念博弈中判断失灵,难以甄别水军和虚假信息。而这又牵涉到一个现实的难题,那就是由于互联网提供的虚拟身份外衣和相关技术的发展,造假内容生产者具有分散性、流动性、大规模性和隐蔽性的特点,导致追踪难度和复杂性与日俱增,再加上规范指引的模糊和滞后,对于那些擦边球性质的造假行为存在难以界定的现实困境,这无疑对内容监管行动造成了严重阻碍。